Searching for scientific articles using an API (application programming interface) allows you to extract data from publisher platforms and databases. With an API, you can create programmatic searches of a citation database, extract statistical data, or query and manipulate your results within a Notebook.

In this module, we will learn to use the Crossref API to search for and analyze scientific articles.

What is Crossref?¶

Crossref is a non-profit organization that helps to provides access to scientific literature. Crossref “makes research outputs easy to find, cite, link, and assess” (https://

Crossref data on scientific publications essentially consists of three elements:

1) Metadata about a publication

2) A URL link to the article

3) A document identifier (doi)

At present Crossref contains information on 80 million scientific publications including articles, books and book chapters.

Installing Packages¶

First we will install packages that will be useful to us as we explore the Crossref API. When importing a package into Jupyter Notebook, we use the command pip install ... to download a package into Jupyter Notebook.

We then use the command import (package name) to use our new package in our code.

# just run this cell

!pip install habanero

#To use Crossref API in Python, we need to import the habanero package

import habanero

from habanero import Crossref

from collections import Counter # for easy counting

import ast # for string to dictionary conversion

import pandas as pd # for data manipulation

import numpy as np # for array manipulation

import matplotlib.pyplot as plt # for data visualization

%matplotlib inline Output

Requirement already satisfied: habanero in /opt/anaconda3/lib/python3.7/site-packages (0.7.4)

Requirement already satisfied: requests>=2.7.0 in /opt/anaconda3/lib/python3.7/site-packages (from habanero) (2.22.0)

Requirement already satisfied: tqdm in /opt/anaconda3/lib/python3.7/site-packages (from habanero) (4.42.1)

Requirement already satisfied: chardet<3.1.0,>=3.0.2 in /opt/anaconda3/lib/python3.7/site-packages (from requests>=2.7.0->habanero) (3.0.4)

Requirement already satisfied: idna<2.9,>=2.5 in /opt/anaconda3/lib/python3.7/site-packages (from requests>=2.7.0->habanero) (2.8)

Requirement already satisfied: certifi>=2017.4.17 in /opt/anaconda3/lib/python3.7/site-packages (from requests>=2.7.0->habanero) (2019.11.28)

Requirement already satisfied: urllib3!=1.25.0,!=1.25.1,<1.26,>=1.21.1 in /opt/anaconda3/lib/python3.7/site-packages (from requests>=2.7.0->habanero) (1.25.8)

WARNING: You are using pip version 20.3.1; however, version 21.1.2 is available.

You should consider upgrading via the '/opt/anaconda3/bin/python -m pip install --upgrade pip' command.

Analysis using Crossref API¶

In the sections below, we will walk through the basics of Crossref.

Data can be accessed using the python packages habanero, crossrefapi, and crossref-commons installed above. In this

, we’ll be focusing on the functionality of the habanero package.

Exploring a Crossref query¶

In the cells below, we walk through using Crossref and exploring the data it gives us. To create an object that takes on the Crossref identity, we assign it to the variable cr.

cr = Crossref() # create a crossref objectThe main function we will use is called cr.works(). This function takes in a query name. As an example, we’ll use the search term “permafrost”.

In order to save this output, we’ll assign it to the variable name permafrost.

# query for the term "permafrost"

permafrost = cr.works(query = "permafrost")If we inspect permafrost, we can see that it is a dictionary. A dictionary is a type of data structure that is indexed by keys.

type(permafrost)dictIn the cell below, try creating your own query for a different search term. Make sure to save it to a variable! For now, limit your search term to a single word.

#Example:

query1 = cr.works(query = "your query")Keys, Indexes, Metadata¶

A dictionary contains key-value pairs, and we can access the values by calling on the keys. In our permafrost dictionary, we can inspect the keys and take a look at the values that it contains.

list(permafrost.keys())['status', 'message-type', 'message-version', 'message']Above, we can see that there are 4 different keys in our permafrost dictionary. We will focus on the values for the message key. In the cell below, we are accessing the values by indexing into the dictionary by the given keys.

permafrost['message']This dictionary is nested, meaning that we can have keys that lead to values which are more dictionaries. It seems like the message contains most of the information we’re interested in. Below, take a look at the keys of the message component of the permafrost dictionary.

list(permafrost['message'].keys()) # keys of the permafrost message dictionary['facets', 'total-results', 'items', 'items-per-page', 'query']Just as we did before, we can inspect what information is contained for different keys of the dictionary. Let’s focus on total results first.

# This tells us the total number of results from our query

permafrost['message']['total-results']8218permafrost['message']['items-per-page'] # tells us how many items per page 20permafrost['message']['query'] # details about our query{'start-index': 0, 'search-terms': 'permafrost'}permafrost['message']['items'] # the items of our queryAbove, we can see that the items contains the majority of information about our query on permafrost. It contains a list of all of the results - we can check this with the type command we used earlier. Since we only are looking at the first page, our items list has only 20 items in it.

type(permafrost['message']['items'])listlen(permafrost['message']['items'])20Creating Tables¶

When our data exists in dictionaries, it’s a little hard to explore and manipulate. In order to tackle this, we’ll create a dataframe of the information so that we can access it more easily.

df_permafrost = pd.DataFrame(permafrost['message']['items'])

df_permafrost.head() # show the first 5 rowsThere are a bunch of columns in our table, and we can’t see all of them by scrolling. Below, we can look at a list of the columns instead.

df_permafrost.columnsIndex(['indexed', 'reference-count', 'publisher', 'issue', 'license',

'content-domain', 'short-container-title', 'DOI', 'type', 'created',

'page', 'source', 'is-referenced-by-count', 'title', 'prefix', 'volume',

'author', 'member', 'published-online', 'reference', 'container-title',

'language', 'link', 'deposited', 'score', 'issued', 'references-count',

'journal-issue', 'URL', 'relation', 'ISSN', 'issn-type', 'subject',

'publisher-location', 'isbn-type', 'published-print', 'update-policy',

'ISBN', 'assertion', 'short-title', 'subtitle', 'archive'],

dtype='object')Container-title seems to stand in for Journal Title. Let’s look at what’s in the container title column:

journal_titles = df_permafrost['container-title']

journal_titles0 [Permafrost and Periglacial Processes]

1 [Thawing Permafrost]

2 NaN

3 [Permafrost and Periglacial Processes]

4 [Permafrost and Periglacial Processes]

5 [Permafrost and Periglacial Processes]

6 [Permafrost and Periglacial Processes]

7 [Permafrost and Periglacial Processes]

8 [Permafrost and Periglacial Processes]

9 [Permafrost and Periglacial Processes]

10 [Permafrost and Periglacial Processes]

11 [Permafrost and Periglacial Processes]

12 [Permafrost and Periglacial Processes]

13 [Permafrost and Periglacial Processes]

14 [Permafrost and Periglacial Processes]

15 [Permafrost and Periglacial Processes]

16 [Permafrost and Periglacial Processes]

17 [Permafrost and Periglacial Processes]

18 [Permafrost and Periglacial Processes]

19 [Permafrost and Periglacial Processes]

Name: container-title, dtype: objectWe can do the same for the publisher column.

df_permafrost['publisher']0 Wiley

1 Springer International Publishing

2 Wiley

3 Wiley

4 Wiley

5 Wiley

6 Wiley

7 Wiley

8 Wiley

9 Wiley

10 Wiley

11 Wiley

12 Wiley

13 Wiley

14 Wiley

15 Wiley

16 Wiley

17 Wiley

18 Wiley

19 Wiley

Name: publisher, dtype: objectData Retrieval¶

We want to be able to retrieve more data from CrossRef. A single CrossRef query can return up to 1,000 results, and since our query has over 42,000 total results, we would need to make 43 queries. Remember at the beginning, we only had 20 results since we only grabbed the first page. The CrossRef API permits fetching results from multiple pages, so by setting cursor to * and cursor_max to 1000 we can grab 1000 queries at once. Querying all 42,000 results would take a long time, so for the purposes of this demonstration we are only using 1000. If you have more time, you could query more results, but be aware that it will take a long time.

# this cell will take a while to run

cr_permafrost = cr.works(query="permafrost", cursor = "*", cursor_max = 1000, progress_bar = True, sort="score", order="desc")100%|██████████| 49/49 [00:52<00:00, 1.07s/it]

We can check that we have the 1,000 messages, and indeed we do.

sum([len(k['message']['items']) for k in cr_permafrost])1000Remember before, when you created your own search query? Here, we’ll go through a few steps to get more results for your query. First, we’ll check how many total results your query has. Run the cell below to find out.

#query1['message']['total-results']If there are fewer than 1000 results, then set cursor_max to the number of results. If there are more than 1000 results, set cursor_max to 1000 so that the code won’t take too long to run. Be sure to fill in query with the same search term you used earlier.

#Your example

#query1 = cr.works(query="queryname", cursor = "*", cursor_max = 1000, progress_bar = True, sort="score", order="desc")In order to get all of the different results from our permafrost query, we need to extract them from cr_permafrost. Below, we create a list where each element is one result. cr_permafrost is a list consisting of pages, where we have 20 results per page. So cr_permafrost has 50 pages of 20 results each, giving us our 1000 results. In order to extract the info and have a list with each element be one results, we need to do some data manipulation.

#Confirm the number of pages

len(cr_permafrost)50In the cell below, we have 2 list comprehensions to get the results from our query. The first one creates a length 50 list that contains only the items instead of the entire dictionary, for each of the 50 pages. The second list comprehension extracts all of the items from the nested lists so that we have a single 1000 item list where each element is one result.

permafrost_items = [k['message']['items'] for k in cr_permafrost] # get items for all pages

permafrost_items = [item for sublist in permafrost_items for item in sublist] # restructure listWe’ll do the same for your query results so that you can have some fun plotting later in the notebook. Just uncomment and run the cell below.

#query_items = [k['message']['items'] for k in query1] # get items for all pages

#query_items = [item for sublist in query_items for item in sublist] # restructure listMore on Searches¶

You may be curious about the cr.works function that we’ve been using to get our data. This is the function that processes your topical “search”. There are different arguments, and we’ve seen how changing these arguments can help us get more search results than just the first 20.

Also, before we asked you to limit your search term to a single word. This is not a hard restriction - we simply did this for simplicity. You can query terms that are more specific and include more words - try it out below! Keep in mind that the more specific your search query, the fewer results you may see. Feel free to play around with it.

longerquery = cr.works(query = 'labrador retriever')

longerquery['message']['total-results']8137Visualization¶

Visualization is an important part of data analysis. Rather than looking at dictionaries and dataframes, we can see a visual summary of our data. In this section, we’ll go over different types of visualizations we can make based off of the different data we have. We’ll start by looking at the publishers. But first, we need to do some wrangling to get the data in a form that’s useful for us.

Grouping and Sorting by Journal¶

In an earlier section, we took our query results and converted them from a dictionary to a dataframe. Below, we will do this for our list of 1000 permafrost_items, and then we can use the dataframe methods of grouping and sorting to get the counts of articles published by each publisher. We can also group by other columns, such as type or language.

In the cell below, we create a dataframe containing all 1000 of our permafrost query results.

permafrost_df = pd.DataFrame(permafrost_items)

permafrost_df.head(10)While we are at it, we’ll convert your search query items into a data frame as well. Adapt the code in the cell to create a dataframe out of your query_items list.

# example

#query_df = pd.DataFrame(query_items)

#query_df.head()Counts¶

We can take the value_count to determine the number of times a given journal or work appears in our results. This will help us determine where research on our topic is commonly published.

journal = permafrost_df['container-title'].str[0].value_counts().rename_axis('titles').reset_index(name='counts')

journal#Try repeating this for your query to see the top 20 titles.

#query_journals = query_df['container-title'].str[0].value_counts().rename_axis('titles').reset_index(name='counts')

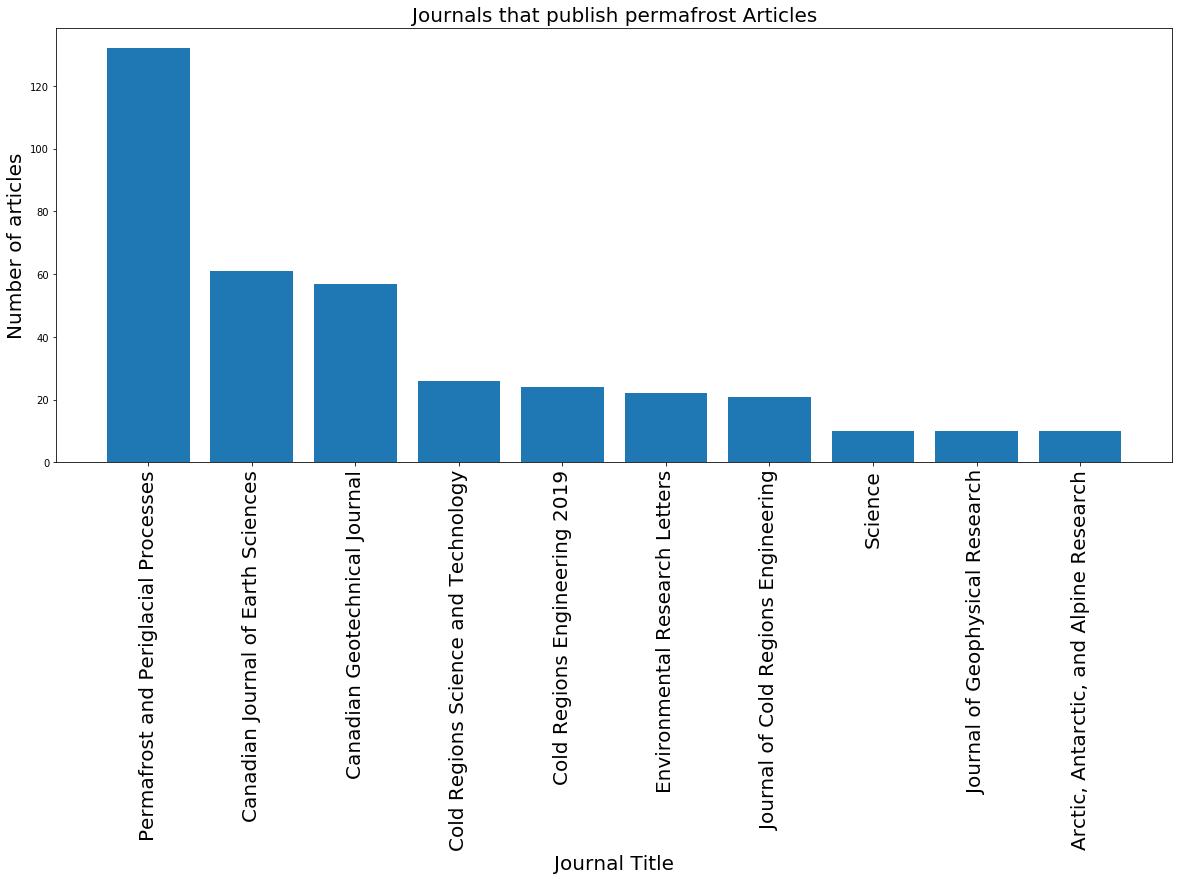

#query_journals.head(20)The journal dataframe above shows us that we have about 300 different titles, and a lot of them published only a few articles. The journal that appears most often published about 130 articles on our topic.

Bar Charts¶

Now that we have a sorted dataframe with journal titles and the number of articles they each published on permafrost, we can use this dataframe to plot the journals with the 10 highest counts.

permafrost_journals_top10 = journal.head(10) # get first 10 publishers

plt.figure(figsize=(20,8)) # set figure size

# create bar plot with publishers and counts

plt.bar(permafrost_journals_top10['titles'], permafrost_journals_top10['counts'])

# rotate publisher names for readability

plt.xticks(permafrost_journals_top10['titles'], rotation='vertical', fontsize = 20)

# labeling

plt.title('Journals that publish permafrost Articles', fontsize = 20) # set title

plt.xlabel('Journal Title', fontsize = 20) # set x label

plt.ylabel('Number of articles', fontsize = 20); # set y label

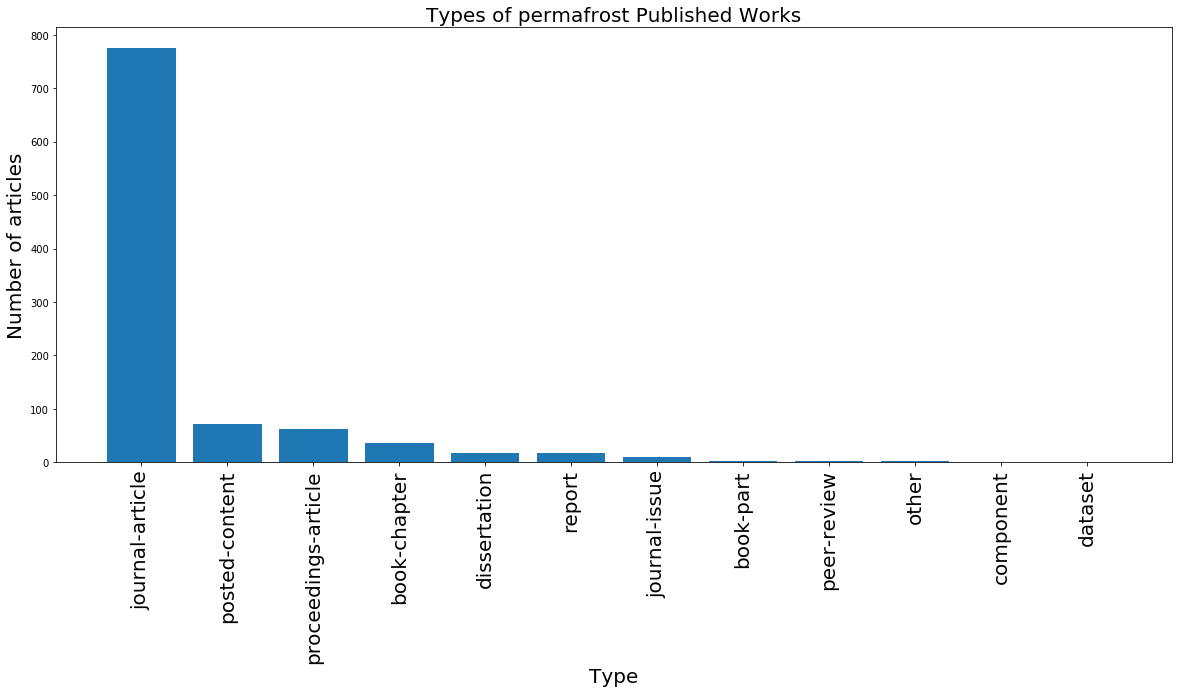

Before, we grouped by journal title. Keep in mind that we can group by other tags as well, such as type of article (type) or the language the article was published in (language). In the cell below, we use another method to group by either type or language and plot the resulting bar chart. Remember to sort your dataframe as well.

# types

permafrost_type_counts = permafrost_df.groupby('type').count()['indexed'].reset_index()

permafrost_type_counts = permafrost_type_counts.rename({'indexed':'count'}, axis = 1) # rename counts column

sorted_permafrost_type_counts = permafrost_type_counts.sort_values(by='count', ascending = False)

sorted_permafrost_type_counts# types

plt.figure(figsize=(20,8)) # set figure size

# create bar plot with publishers and counts

plt.bar(sorted_permafrost_type_counts['type'], sorted_permafrost_type_counts['count'])

# rotate publisher names for readability

plt.xticks(sorted_permafrost_type_counts['type'], rotation='vertical', fontsize = 20)

# labeling

plt.title('Types of permafrost Published Works', fontsize = 20) # set title

plt.xlabel('Type', fontsize = 20) # set x label

plt.ylabel('Number of articles', fontsize = 20); # set y label

If you’d like, you can use the cells below to create bar charts of publisher, language, or type for your own query by grouping and sorting your query_df dataframe! Feel free to reference the cells above for an example with permafrost_df.

# fill in the ...

#counts = permafrost_df.groupby('...').count()['indexed'].reset_index()

#counts = counts.rename({'indexed':'count'}, axis = 1) # rename counts column

#sorted_counts = counts.sort_values(by='count', ascending = False)

#sorted_counts# fill in the ...

#plt.figure(figsize=(20,8)) # set figure size

# create bar plot

#plt.bar(sorted_counts['...'], sorted_counts['count'])

# rotate labels for readability

#plt.xticks(sorted_counts['...'], rotation='vertical', fontsize = 20)

# labeling

#plt.title('...', fontsize = 20) # set title

#plt.xlabel('...', fontsize = 20) # set x label

#plt.ylabel('...', fontsize = 20); # set y labelCitations¶

In scientific literature, we are often also interested in the number of references a journal article has, or the number of times the article was cited. Let’s see which articles have been cited the most - we can do this by sorting our dataframe by the is-referenced-by-count column. Our dataframe has 45 columns, but we’ll just focus on a few. We’ll look at the title, is-referenced-by-count, publisher, and published-print (the date it was published in print).

most_cited = permafrost_df.sort_values('is-referenced-by-count', ascending=False)

most_cited = most_cited[['title', 'is-referenced-by-count', 'container-title', 'published-print', 'DOI']]

most_citedLet’s look at the top 10 most cited articles.

most_cited.head(10)What is the most cited article, and when was it published? Do you think the date it was published has an effect on the number of times the article was referenced?

Replace this line with your answer

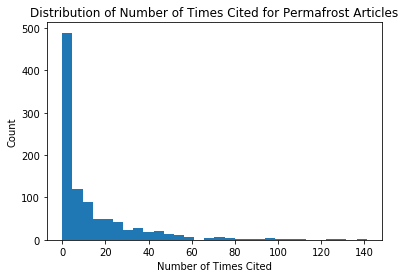

Visualizing the Number of Citations¶

When looking at our most_cited table, we can see that the most number of times an article was referenced was 181, and the least was 0. Using a type of visualization called a histogram, we can look at the distribution of time an article was referenced. We’ll do this in the cell below.

plt.hist(most_cited['is-referenced-by-count'], bins = 30) # generate histogram

# labeling

plt.title('Distribution of Number of Times Cited for Permafrost Articles') # set title

plt.xlabel('Number of Times Cited') # set x label

plt.ylabel('Count'); # set y label

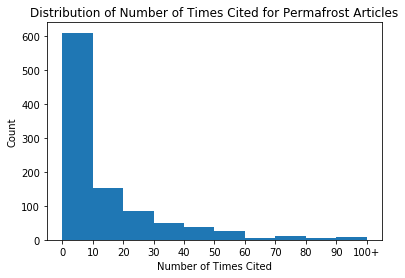

We can see that this histogram is very skewed, with the majority of articles being cited fewer than 50 times. We can adjust the bins in order to see a better distribution, by specifying the sizes of the bins.

plt.hist(most_cited['is-referenced-by-count'], bins = [0, 10, 20, 30, 40, 50, 60, 70, 80, 90, 100]) # generate histogram

# labeling

plt.title('Distribution of Number of Times Cited for Permafrost Articles') # set title

plt.xticks([0, 10, 20, 30, 40, 50, 60, 70, 80, 90, 100], [0, 10, 20, 30, 40, 50, 60, 70, 80, 90, '100+']) # set x axis tickmarks

plt.xlabel('Number of Times Cited') # set x label

plt.ylabel('Count'); # set y label

In the cells below, we’ve provided code to look at the number of times each article was cited for your query_df. In the following cell, try applying what you’ve just used to find the most cited article, the date it was published, and create a histogram showing the distribution for all articles.

#your example

#your_most_cited = query_df.sort_values('is-referenced-by-count', ascending=False)

#your_most_cited = your_most_cited[['title', 'is-referenced-by-count', 'container-title', 'published-print', 'DOI']]

#your_most_cited# fill in the ...

#plt.hist(...['is-referenced-by-count'], bins = ...) # generate histogram

# labeling

#plt.title('...') # set title

#plt.xlabel('...') # set x label

#plt.ylabel('...'); # set y labelQueries Over Time¶

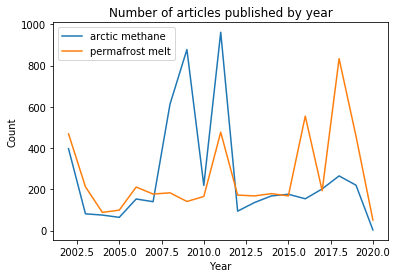

For our final visualization, we will look at 2 queries over time. To expand our searches to 2 words, we will look at queries for permafrost melt and arctic methane, and look at the number of articles that were published by year. Since running queries takes a long time, and ideally we want to have more years to compare over time, we pre-ran the queries and saved the results into a csv which we’ll load in the cell below.

The following 8 cells show the code we used to generate the csv files.

cr_permafrost = cr.works(query="permafrost melt", cursor = "*", cursor_max = 5000, progress_bar = True, sort="score", order="desc")100%|██████████| 249/249 [03:51<00:00, 1.08it/s]

permafrost_items = [k['message']['items'] for k in cr_permafrost] # get items for all pages

permafrost_items = [item for sublist in permafrost_items for item in sublist] # restructure listpermafrost_df = pd.DataFrame(permafrost_items)permafrost_df.to_csv('permafrost_melt_5000.csv')cr_arctic = cr.works(query="arctic methane", cursor = "*", cursor_max = 5000, progress_bar = True, sort="score", order="desc")100%|██████████| 249/249 [03:42<00:00, 1.12it/s]

arctic_items = [k['message']['items'] for k in cr_arctic] # get items for all pages

arctic_items = [item for sublist in arctic_items for item in sublist] # restructure listarctic_df = pd.DataFrame(arctic_items)arctic_df.to_csv('arctic_methane_5000.csv')#cr_query1 = cr.works(query="your first query", cursor = "*", cursor_max = 100, progress_bar = True, sort="score", order="desc")#query1_items = [k['message']['items'] for k in cr_query1] # get items for all pages

#query1_items = [item for sublist in query1_items for item in sublist] # restructure list#query1_df = pd.DataFrame(query1_items)#query1_df.to_csv('query1.csv')#cr_query2 = cr.works(query="your second query", cursor = "*", cursor_max = 100, progress_bar = True, sort="score", order="desc")#query2_items = [k['message']['items'] for k in cr_query2] # get items for all pages

#query2_items = [item for sublist in query2_items for item in sublist] # restructure list#query2_df = pd.DataFrame(query2_items)#query2_df.to_csv('query2.csv')Below, we’ll load in our query data and extract the year from the created column. Since we read in our data from a csv, our created column got converted to strings instead of dictionaries. We use the function ast.literal_eval() in order to convert the string back to a dictionary, and then access the year by indexing into date-parts.

arctic_df = pd.read_csv('arctic_methane_5000.csv')

permafrost_df = pd.read_csv('permafrost_melt_5000.csv')#query1_df = pd.read_csv('query1.csv')

#query2_df = pd.read_csv('query2.csv')arctic_years = [ast.literal_eval(k)['date-parts'][0][0] for k in arctic_df['created']] # extract year

permafrost_years = [ast.literal_eval(k)['date-parts'][0][0] for k in permafrost_df['created']] # extract year#query1_years = [ast.literal_eval(k)['date-parts'][0][0] for k in query1_df['created']] # extract year

#query2_years = [ast.literal_eval(k)['date-parts'][0][0] for k in query2_df['created']] # extract yearIn the cell below, we create a plot comparing the number of articles published for glacier melt and for permafrost melt per year. For year_counts_glacier and year_counts_permafrost, we use the python package Counter to return the counts of articles for each year. We sort by year so that our plot follows sequentially.

year_counts_arctic = dict(sorted((Counter(arctic_years)).items())) # create dictionary of glacier year counts

year_counts_permafrost = dict(sorted((Counter(permafrost_years)).items())) # create dictionary of permafrost year counts

plt.plot(list(year_counts_arctic.keys()),list(year_counts_arctic.values()), label = 'arctic methane')

plt.plot(list(year_counts_permafrost.keys()),list(year_counts_permafrost.values()), label = 'permafrost melt')

#plt.xticks(np.arange(2002, 2019))

plt.title('Number of articles published by year')

plt.xlabel('Year')

plt.ylabel('Count')

plt.legend();

Our search results show a spike in articles related to methane in the arctic in 2008 and 2011, and a later set of spikes for articles about permafrost melt between 2016-2019. We would have to dig in a bit more to understand the implications of these peaks, but they do give you a place to start when exploring trending topics.

If you are interested, you could repeat the process of getting queries and edit the above code to compare two search terms that you are interested about.

year_counts_query1 = dict(sorted((Counter(query1_years)).items())) # create dictionary of glacier year counts

year_counts_query2 = dict(sorted((Counter(query2_years)).items())) # create dictionary of permafrost year counts

plt.plot(list(year_counts_query1.keys()),list(year_counts_query1.values()), label = 'query1')

plt.plot(list(year_counts_query2.keys()),list(year_counts_query2.values()), label = 'query2')

#plt.xticks(np.arange(2002, 2019))

plt.title('Number of articles published by year')

plt.xlabel('Year')

plt.ylabel('Count')

plt.legend();Bibliography ¶

Paul Oldham - Adapted CrossRef R guide to Python. https://

poldham .github .io /abs /crossref .html #introduction

Notebook originally developed by: Keilyn Yuzuki, Anjali Unnithan

This chapter originated as a Data Science Module: http://